Habits of Mind

Why college students who do serious historical research become independent, analytical thinkers

Journalists and bloggers often complain about humanists, and the common theme is obscurity. Long sentences laced with jargon and theoretical gymnastics. Research topics of interest only to a small enough group of scholars to fit around a seminar table. And “useless.” So useless that the public ought not to subsidize students foolish enough to indulge their fascination with words, symbols, narratives, and paradoxes. Humanists—so the books and blog posts that assess higher education keep telling us—have sold their birthright for a mess of pottage. Once upon a time, humanists taught great texts and raised big questions. Their courses might have lacked a certain specificity, but they had a soul. And nobody worried in those days about whether those courses led to a job.

According to this narrative, in the past half-century or so humanists have tried to become specialists, as if they were scientists, or at least pretending to be. They have persuaded universities to hire them for their skill at research, not for their ability to captivate and inspire a roomful of students. Then they themselves look for the same qualities in the next generation: when faculty members choose graduate students and appoint junior colleagues, they ask not what positions the candidates take in the Great Debates but how long they can sit still, how much archive dust they can eat, and how many trivial arguments they have intervened in already.

It’s all small scale, all the time: little battles, trench warfare of the mind, defined with mesmerizing precision by generations of articles contesting little bits of ground. That’s what brings in the grants and wins the prizes at annual meetings. That’s what raises university and department ratings (since these ratings depend on what people have heard about the quality of research at other schools). And that’s what keeps generating further little border skirmishes, which can attract new combatants.

To go by this critique, the obsession with the minute and the technical not only determines the course of scholars’ research; worse still, it shapes their teaching. Nowadays specialists can’t teach the survey courses of yesteryear. They haven’t read widely or thought about the big themes of history or literature (which of course was easier back when most ideas that mattered emanated from two continents). Instead they offer seminars focused on tiny questions and single authors and artists. Charismatic in their intellect, these professors seduce the most gifted students into imitating them. The university thus becomes a machine—as the critics endlessly repeat—for producing teachers and students who know more and more about less and less.

Students who come through this routine may be wonderful scholars in the sense that they can skillfully carry out the exercises with which professional historians or literary critics earn their livings. But their journey to the PhD locks them into little boxes, even smaller than those that house their teachers. They know nothing about the boxes to their right and left, to say nothing of those in other rows: it’s like some hideous academic parody of the Matrix.

Students from the old system were prepared to take the values and history and literature they had discussed along with them into the world, and use them as a way to think about their experiences. Students from the new system are unworldly to the point of absurdity. As William Deresiewicz lamented in a memorable article in this publication, they probably know how to talk in foreign languages with highly educated colleagues around the world, but they can’t carry on a conversation with their plumber—much less take an informed position on national politics, or even on the university governance issues that shape their experience as students and will shape their lives if they become professors.

That’s the meme, or set of memes, that’s floating around. And it does describe some professors and some graduate students in some departments at some institutions—enough so that anyone who does a bit of exploration can find egregious examples. But as a general description of the landscape of the humanities in American higher education, it’s about as close to the reality as are the other zombie platitudes about higher ed that stalk the Internet and are repeated in every article—and that no amount of facts seems able to kill. The humanities are dying for lack of students? Not according to the long-term trends—if you take the time to look at all the data, as Benjamin Schmidt of Northeastern University has done, instead of just the data that happen to be accessible online—and not when you factor in the increase in the number and diversity of students. Or what about the notion that humanities graduates face only one choice—become a barista or starve? Not according to recent data that, again, reflect long-term trends rather than the first year or two after graduation.

No. The data show that especially in colleges and universities not considered to be among the elite, humanities majors have, for the last quarter century, been holding steady. And if our students sometimes take a few years to figure out their next steps, that’s not such a bad thing, especially since they do quite well over the long run—even if teachers, artists, and nonprofit professionals don’t earn as much as their contemporaries in the private sector. What’s more, they owe their success in part to college professors who care about teaching, and who introduce students to the magic of research.

So let’s do something unusual in the public discussion: let’s stop talking about “the humanities”—a group of disciplines so varied in their methods and culture that all generalizations about them do violence to the facts on the ground—and talk about one discipline instead. History—the kind of history that we practice and teach at the college level—was born in research, in the excavations in ecclesiastical archives inspired by the Reformation and the opening of political archives in the revolutionary period of the late 18th century and after.

Every time history has been renewed—by the great French social and cultural historians of the Annales school, by the British social historians of the 1960s and later, by historians of slavery and of women, of colonialism and of war—the renewal has come in new ways of doing research and writing about it. Historians call this continuous process revisionism, and we see it as a good thing: a continuing revelation of new facets of past human life and experience.

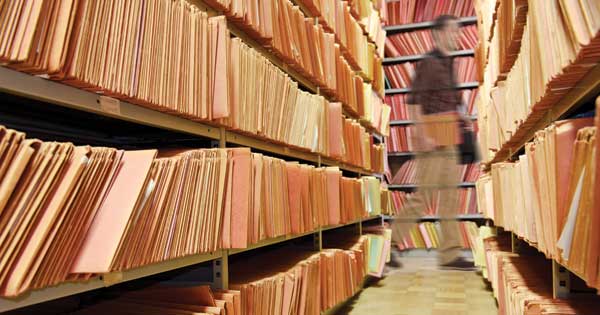

Students of history learn how to do research in institutions of many kinds. In small liberal arts colleges or regional public universities, they might focus on the historical dynamics of local communities and rural or small-town cultures. At urban universities, courses might revolve around visits to archives that bulge with social and political history, or to immigrant neighborhoods that have evolved in more complex ways than the local mythology would have it. We historians assign term papers in our courses, and often require independent work and BA theses of our majors. We don’t just perpetuate these quaint customs. We’re proud of doing so. Listen to a couple of historians sitting over pizza in a restaurant and you’re likely to hear them bragging about research projects that their students have carried out.

Go, for example, to the webpage of the history department at Messiah College, near Harrisburg, Pennsylvania, and you’ll read about students doing every kind of research you can imagine, from working with a museum professional to excavate and restore a historic cemetery, to pursuing the family histories of African Americans in the documents at the Schomburg Center of the New York Public Library, to developing a digital archive on the history of Harrisburg. Go to the webpage of the history department at the University of California, Berkeley and you’ll find a student-edited journal, Clio’s Scroll, showcasing detailed and imaginative historical research—often drawn from senior theses—on subjects such as drama, landscape, and political thought.

Or just go to TeachArchives.org and read about the Students and Faculty in the Archives project of the Brooklyn Historical Society, which brought more than 1,100 undergraduates from three local colleges—St. Francis College, Long Island University, and City Tech—into the archives. They worked in small groups and, in some cases, on summer fellowships. They explored a vast range of sources, among them the journals of one Brooklynite, Gabriel Furman, and images and ephemera illustrating the history of Coney Island. And they produced everything from individual papers to a collaborative exhibit on Furman and his world.

Why do we teach these students—fresh, bright young undergraduates—to do research? Why take people who are forming themselves, who should be thinking about life, death, and the universe, and send them off to an archive full of dusty documents and ask them to tell us something new about the impact of the Civil War in a country town in Pennsylvania or Virginia, or the formation of Anglo-Norman kingship, or the situation of slaves in the Old South?

The answer is so simple that we sometimes forget to give it, but it matters. We teach students to do research because it’s one powerful way to teach them to understand and appreciate the past on its own terms, while at the same time finding meaning in the past that is rooted in the student’s own intellect and perspective. Classrooms and assigned readings are necessary to provide context: everyone needs to have an outline in mind, if only to have something to take apart; and everyone needs to know how to create those outlines and query them constructively. Reading monographs and articles is vital, too. To get past the big, generalized stories, you have to see how professional scholars have formed arguments, debated one another, and refined theories in light of the evidence.

But the most direct and powerful way to grasp the value of historical thinking is through engagement with the archive—or its equivalent in an era when oral history and documentary photography can create new sources, and digital databases can make them available to anyone with a computer. The nature of archives varies as widely as the world itself. They can be collections of documents or data sets, maps or charts, books with marginal notes scrawled in them that let you look over the shoulders of dead readers, or a diary that lets you look over the shoulder of a dead midwife. What matters is that the student develops a question and then identifies the particular archive, the set of sources, where it can be answered.

Why do this? Partly because it’s the only way for a student to get past being a passive consumer and critic and to become a creator, someone who reads other historians in the light of having tried to do what they do. Partly because it’s the way that historians help students master skills that are not specific to history. When students do research, they learn to think through problems, weigh evidence, construct arguments, and then criticize those arguments and strip them down and make them better—and finally to write them up in cogent, forceful prose, using the evidence deftly and economically to make their arguments and push them home.


The best defense for research, however, is that it’s in the archive that one forms a scholarly self: a self that, when all goes well, is intolerant of weak arguments and loose citation and all other forms of shoddy craftsmanship; a self that doesn’t accept a thesis without asking what assumptions and evidence it rests on; a self that doesn’t have a lot of patience with simpleminded formulas and knows an observation from an opinion and an opinion from an argument.

This self, moreover, is the student’s own construction. Supervision matters: people new to historical work need advice in framing questions, finding sources, and shaping arguments. In the end, though, historical research is always, and should always be, a bungee jump, a leap into space that hasn’t been mapped or measured. The faculty supervisor straps on the harness and sees to the rope. But the student takes the risk and reaps the rewards. This isn’t just student-centered learning, in which the student’s interests are put first; it’s student defined and student executed, the work of a self-reliant, observant, and creative person.

A self like this can seem unworldly, especially if the “real world” resembles a political culture that dismisses complexity and context as “academic.” But in a deeper sense, this is a worldly education, in the traditional way that humanistic education has always embodied. A good humanities education combines training in complex analysis with clear communication skills. Someone who becomes a historian becomes a scholar—not in the sense of choosing a profession, but in the broader meaning of developing the scholarly habits of mind that value evidence, logic, and reflection over ideology, emotion, and reflex. A student of history learns that empathy, rather than sympathy, stands at the heart of understanding not only the past but also the complex present.

That is because in the archive the historian has the opportunity and the obligation to listen. A good historian enters the archive not to prove a hypothesis, not to gather evidence to support a position that assumptions and theories have already formed, but to answer a question. It’s an amazing experience to see and talk with and learn from the dead. As Machiavelli said so well, you ask them questions about what they did and why, and in their humanity, they answer you, and you learn what you can’t learn any other way.

History has many mansions nowadays. Historians work on every period of human history, every continent, and every imaginable form of human life. But they all listen to the dead (and yes, in some cases, the living as well): that’s the common thread that connects every part of history’s elaborate tapestry of methods. All historians muster the best evidence they can to answer their questions. They offer respect and admiration to those who show the greatest ingenuity in raising new questions, bringing new characters onto the stage of history, and finding new evidence with which to do so.

As for talking with people who don’t work in universities—a student who writes a good history paper has learned how to communicate knowledge to anyone. History has been a form of narrative art, as well as of inquiry into the past, since the first millennium BCE, when Jewish and Greek and Chinese writers began to produce it. That’s why Herodotus could read his history of the Persian Wars aloud at the Olympic Games—a performance for which he received the large sum of 10 talents. Historians care about clear speech and vigorous prose, and believe that even complex and technical forms of inquiry into the past can be conveyed in accessible and attractive language. Accordingly, we don’t separate research from writing. Just ask the students whose papers and chapters we send back, adorned with marginalia in blue pen or the colors of Track Changes.

When a student does research in this way—when she attacks a problem that matters to her by identifying and mastering the sources, posing a big question, and answering it in a clear and cogent way, in the company of a trained professional to whom she and her work matter—she’s not becoming a pedant or a producer of useless knowledge. She’s doing what students of the humanities have always done: building a self and a soul and a mind that she can take with her wherever she goes, and that will make her an independent, analytical thinker and a reflective, self-critical person. Isn’t that what we’re supposed to be doing?